Site Search explained

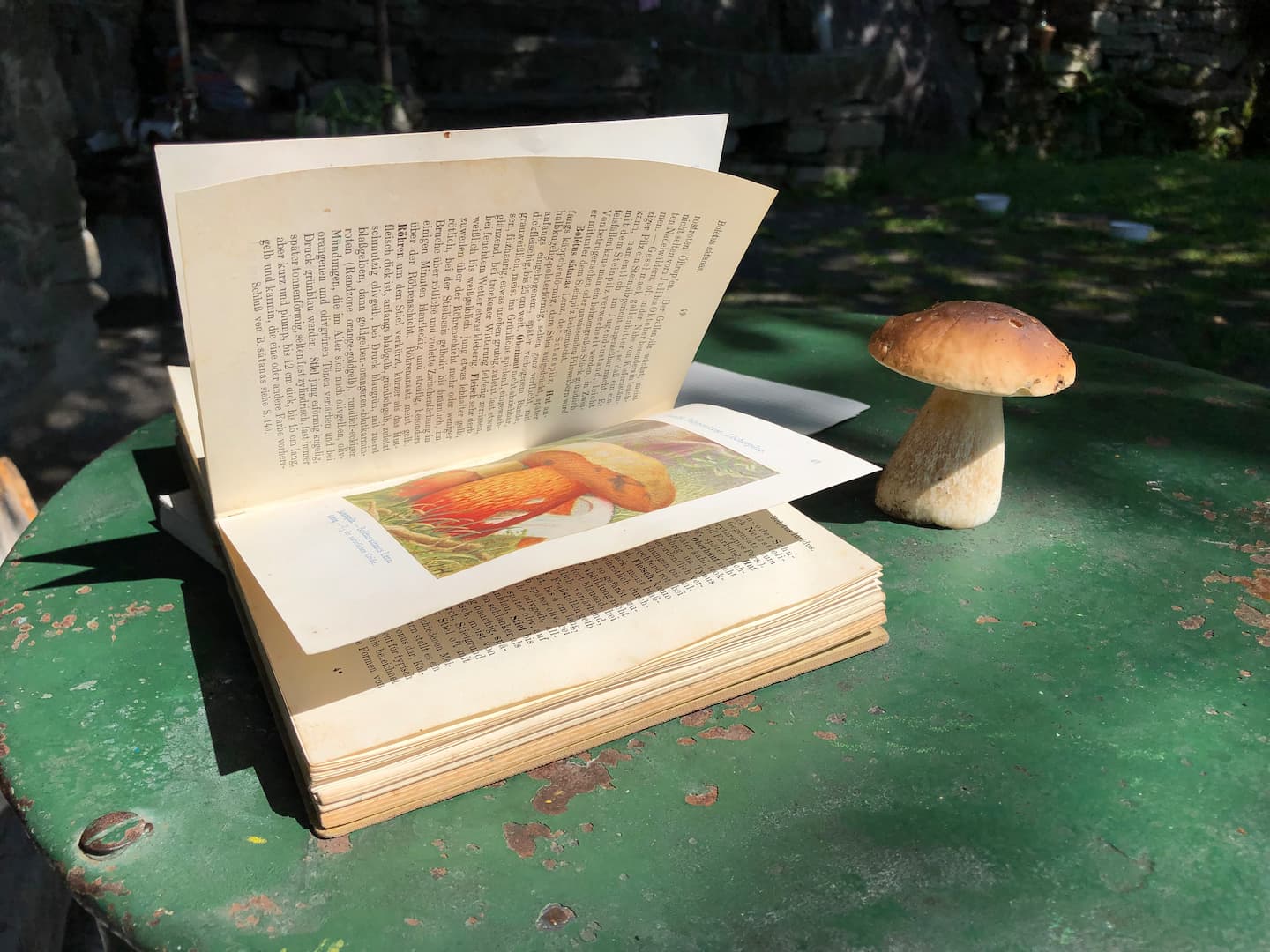

Working with search for a website is fun. It is a bit like collecting mushrooms from the wild. Same three steps: collect, improve, serve.

You go into the woods to collect them, which can be full of relaxation and anticipation. You take what you find home and prepare it for preservation. And in the third phase, you get to eat what you put in store. All three phases are important, and each can be professional fun or pain. Let me take you through the steps.

Collecting the data

You need to go out into the data space, which in this story is a website, to collect the data you want to index. This is typically done by a (ro)bot or crawler that starts at a point you indicate and follows the links to discover the space and pick up the data. Another possibility is to use an API to collect all the information from the backend of your website.

Picture a forest in which you need to find the mushrooms. Sometimes it’s more like harvesting, and sometimes it is more like hard work: ditches, slippery branches, wild animals, insects. It all depends on how well-structured the pages are and whether they are properly connected. In this first phase, you typically give instructions and limits to the crawler: start searching here and here, don’t go beyond the forest or a certain path, don’t pick up blue mushrooms, watch out for the bears and blow the horn once done.
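To make those instructions and limits concrete, here is a minimal sketch of a crawler in Python. It makes several assumptions: the "website" is a small in-memory dictionary (`SITE`) instead of real HTTP requests, and the names `crawl`, `allowed_prefix`, `excluded_parts` and `max_pages` are made up for this illustration, not taken from any particular crawler product.

```python
from collections import deque

# Hypothetical in-memory "website": URL -> (page text, outgoing links).
# A real crawler would fetch pages over HTTP and parse the links from HTML.
SITE = {
    "https://example.com/": ("Home", ["https://example.com/blog/", "https://example.com/admin/"]),
    "https://example.com/blog/": ("Blog index", ["https://example.com/blog/search", "https://other.example.org/"]),
    "https://example.com/blog/search": ("Site search explained", ["https://example.com/"]),
    "https://example.com/admin/": ("Private", []),
    "https://other.example.org/": ("Another site", []),
}


def crawl(start_urls, allowed_prefix, excluded_parts=(), max_pages=100, fetch=SITE.get):
    """Breadth-first crawl that stays inside the given limits."""
    queue = deque(start_urls)   # "start searching here and here"
    seen = set(start_urls)
    collected = {}
    while queue and len(collected) < max_pages:
        url = queue.popleft()
        page = fetch(url)
        if page is None:        # broken link: nothing to pick up
            continue
        text, links = page
        collected[url] = text
        for link in links:
            if link in seen:
                continue
            if not link.startswith(allowed_prefix):           # don't go beyond the forest
                continue
            if any(part in link for part in excluded_parts):  # don't pick up blue mushrooms
                continue
            seen.add(link)
            queue.append(link)
    return collected            # "blow the horn once done"


pages = crawl(
    ["https://example.com/"],
    allowed_prefix="https://example.com/",
    excluded_parts=("/admin/",),
)
```

With this toy site, the crawl picks up the home page, the blog index and the article, and skips both the admin page and the external site.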

Preparing and improving the data

Once you have retrieved the data, you can enrich it. Let’s say you took the mushrooms home: you want to clean them up (some with water, others with a brush), sort them a bit (insects) and maybe get rid of some you suspect to be poisonous. Preparing the data you have might involve a number of processes. One is that you can work with semantics. You can use synonyms and hypernyms to give more meaning to what you have. You can check for words that belong together and mark them as such. It might be necessary to split the data based on the language it is in. And then there are tasks that might be very specific to the recipe you have in mind. Product names, people and categories might need to be marked.
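As an illustration of two of these steps, synonyms and words that belong together, here is a small Python sketch. The dictionaries `SYNONYMS` and `PHRASES` and the function `enrich` are hypothetical; a real setup would use a much larger dictionary maintained for the site, and would handle languages and entities as well.

```python
import re

# Hypothetical dictionaries, made up for this example.
SYNONYMS = {"fungus": "mushroom", "toadstool": "mushroom"}
PHRASES = {("site", "search"): "site_search"}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def enrich(text):
    """Mark two-word phrases as one token and map synonyms to one term."""
    tokens = tokenize(text)
    enriched = []
    i = 0
    while i < len(tokens):
        pair = tuple(tokens[i:i + 2])
        if pair in PHRASES:                  # words that belong together
            enriched.append(PHRASES[pair])
            i += 2
            continue
        enriched.append(SYNONYMS.get(tokens[i], tokens[i]))  # synonym mapping
        i += 1
    return enriched


terms = enrich("Site search found a toadstool")
```

Here `terms` comes out as `["site_search", "found", "a", "mushroom"]`: the phrase is kept together and the synonym is mapped to a single term.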

Once all this work on the data is done, it is put in an index for fast retrieval. A bit like the index at the back of a book.
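The book comparison can be sketched as an inverted index: for every word, a list of the pages it appears on. The following Python is a simplified illustration under that assumption; the function names `build_index` and `lookup` and the sample documents are invented for the example, and real search engines store far more (positions, weights, fields).

```python
import re
from collections import defaultdict


def build_index(docs):
    """Map every term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in re.findall(r"[a-z0-9]+", text.lower()):
            index[term].add(doc_id)
    return index


def lookup(index, query):
    """Return the ids of documents that contain all terms of the query."""
    terms = re.findall(r"[a-z0-9]+", query.lower())
    if not terms:
        return set()
    result = set(index.get(terms[0], set()))
    for term in terms[1:]:
        result &= index.get(term, set())  # keep only documents with every term
    return result


docs = {
    "a": "Collecting mushrooms in the forest",
    "b": "Cleaning mushrooms at home",
    "c": "Site search for a forest blog",
}
index = build_index(docs)
both = lookup(index, "mushrooms forest")
```

Looking up a word is now a single dictionary access instead of a scan through every page, which is where the speed comes from.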

Finding results

The third step is to offer a search box and a results page to do the actual searching. In the simplest variant, it is just entering search words and seeing a list of results. But you can go much further, and there are a number of possibilities to improve the experience of searching. You might want to have filters or facets, autosuggest, spelling corrections, highlighting of results in context, recommendations, or a way to push certain results you deem more valuable. Natural Language Processing (NLP) might also come in here, so that the person searching can type in everyday language.
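Two of these extras, spelling corrections and facets, can be sketched in a few lines of Python. This is only an illustration: the documents in `DOCS` and the `search` function are made up, the spelling correction simply leans on `difflib.get_close_matches` from the standard library, and a real search engine would do this against its index instead of scanning every document.

```python
from collections import Counter
from difflib import get_close_matches

# Hypothetical documents, already collected and prepared.
DOCS = [
    {"title": "Collecting mushrooms", "category": "nature", "text": "collecting mushrooms in the forest"},
    {"title": "Site search explained", "category": "tech", "text": "crawler index search box"},
    {"title": "Elasticsearch notes", "category": "tech", "text": "index search cluster"},
]


def search(query, category=None):
    """Correct misspelled words, find matching documents, count facets."""
    vocabulary = sorted({word for doc in DOCS for word in doc["text"].split()})
    corrected = []
    for word in query.lower().split():
        if word in vocabulary:
            corrected.append(word)
        else:
            # Spelling correction: take the closest known word, if there is one.
            close = get_close_matches(word, vocabulary, n=1)
            corrected.append(close[0] if close else word)
    hits = [doc for doc in DOCS if all(w in doc["text"].split() for w in corrected)]
    facets = Counter(doc["category"] for doc in hits)  # counts shown next to the filters
    if category is not None:                           # visitor clicked a facet
        hits = [doc for doc in hits if doc["category"] == category]
    return {
        "query": " ".join(corrected),
        "hits": [doc["title"] for doc in hits],
        "facets": dict(facets),
    }


result = search("serach")
```

The misspelled query is corrected to "search", both tech articles are returned, and the facet count tells the visitor how many results each category holds before they filter.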

Basically, this third step is the goal. If the first two are done properly, the visitor should be able to get what is necessary and maybe even be inspired to look further because of recommendations. Whether the User Experience is enticing depends on how well the interactions are presented and how well they work.

The site search on this website

In the past, I mostly worked with the Google Search Appliance and a solution based on Elasticsearch. For this website, I started with an API version that came with the template I use (Liebling for Ghost). That extracted data on articles and offered a simple search. This turned out not to be effective and sometimes plainly wrong. Plus, it was not a full-text search.

So I searched around and gave the free version of the search engine SiteSearch 360 a spin. Their crawler went through my site and the full text of all articles; after a few iterations it had found them all and put the data in an index. Then I was able to use a dictionary and some other tricks to make the data even more useful.

To serve up a search box and results page, they provided me with an easy-to-implement solution. Now the search shows dynamic results with a nice preview of the pages found. In my account, I can see some basic but valuable analytics on what visitors searched for and whether they were successful.

So far, I love it! Really well done and very extensive.

Further reading on Site Search

Algolia is a provider of site search capabilities and can be considered a market leader. Ivana Ivanovic, Senior Content Strategist of Algolia, wrote this guide on site search.